One of the key advantages of virtual reality (VR) technology is that the user gets a better sense of scale and depth than when looking at regular monitors and projectors. People who have experienced VR models in a correctly set up head-mounted display (HMD), such as the Oculus Rift, generally report that the virtual world feels like it is at the right scale. For architects and other design professionals, getting an accurate sense of the scale, depth, and volume of a space is critical. IrisVR delivers a premium VR experience for architects and other AEC professionals. We are not content with users’ qualitative feedback alone; we want to take a scientific approach to verify that scale in VR matches real-world scale. So we designed and conducted two experiments that “project” the virtual world into the real world. We can then measure virtual distance and rotation in the physical world and see whether they match.

Experiment One: Leap Motion Distance Tracking and Measurement

Introduction

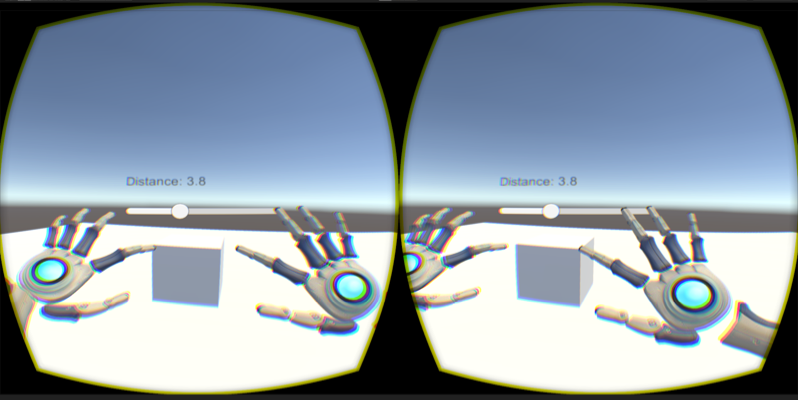

Leap Motion is a hand-tracking device that tracks the user’s arms, palms, and fingers. We set up the Leap Motion to work with the Oculus Rift in Unity so that users can see their hands in the virtual world. The idea is this: if the user sees a box in the virtual world and reaches out to touch its edges with their index fingers, the distance between the virtual index fingers gives us the width of the virtual box (see Figure 1). Meanwhile, measuring the distance between the user’s real fingers gives us the width of the box in the real world. By comparing the two measurements, we can tell whether the box in VR is scaled correctly.

Figure 1. Touching the edges of a box in the virtual world to measure the box dimensions
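The virtual side of this measurement comes down to a Euclidean distance between two tracked fingertip positions. The snippet below is a minimal sketch of that calculation, not the code used in the experiment: the fingertip coordinates are made-up placeholders, and it assumes positions are reported in meters (a common convention for Unity scenes).

```python
import math

# Hypothetical fingertip positions in the virtual world, in meters.
# These are placeholder values, not data from the experiment.
left_tip = (-0.10, 1.20, 0.40)
right_tip = (0.10, 1.20, 0.40)

METERS_TO_INCHES = 39.3701


def fingertip_distance_inches(a, b):
    """Euclidean distance between two 3D points given in meters, in inches."""
    return math.dist(a, b) * METERS_TO_INCHES


width_in = fingertip_distance_inches(left_tip, right_tip)
print(f"Virtual box width: {width_in:.2f} in")
```

The same number, measured with a tape measure between the real fingertips, is what the experiment compares against.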

Hypothesis

A distance measured in the real world should match the corresponding distance in the virtual world.

Experiment Setup

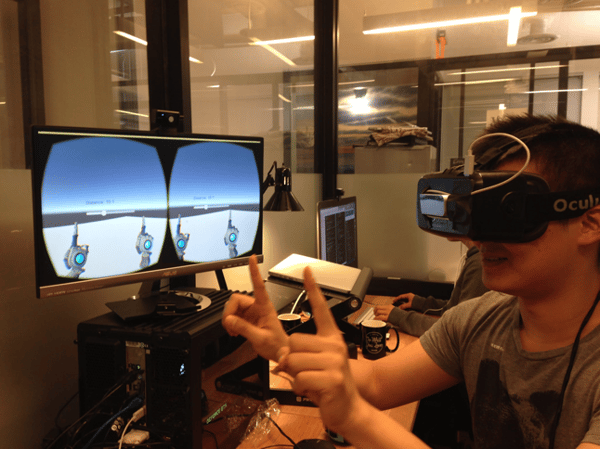

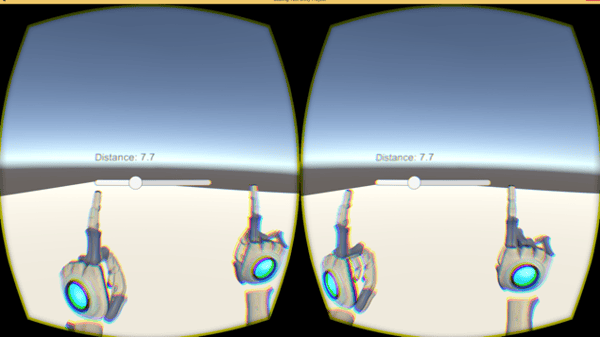

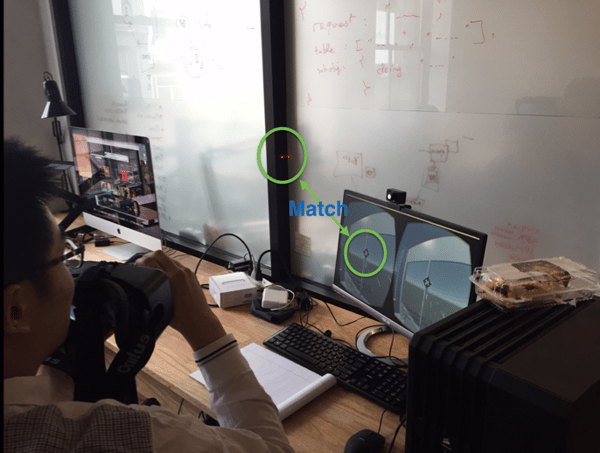

We mounted the Leap Motion device on the center of the Oculus Rift so that it tracks the user’s hands from the perspective of the user’s eyes. Figure 2 and Figure 3 show the experiment setup. In Figure 2, the user holds up their index fingers in front of their face. The Leap Motion tracks the hands and projects them into the virtual world, as shown on the monitor. The distance between the virtual fingers is also displayed in the virtual world, so the user can spread their hands to a particular distance (see Figure 3).

Figure 2. Experiment Setup

Figure 3. Leap Motion tracks virtual hands

Experiment Process

We recruited five participants for this experiment. Each participant was asked to hold their index fingers apart at a given distance, and a measurer then measured the distance between the fingertips. To reduce the error related to measuring and tracking, we measured several different distances: 1”, 2”, 4”, 8”, and 12”.

Results

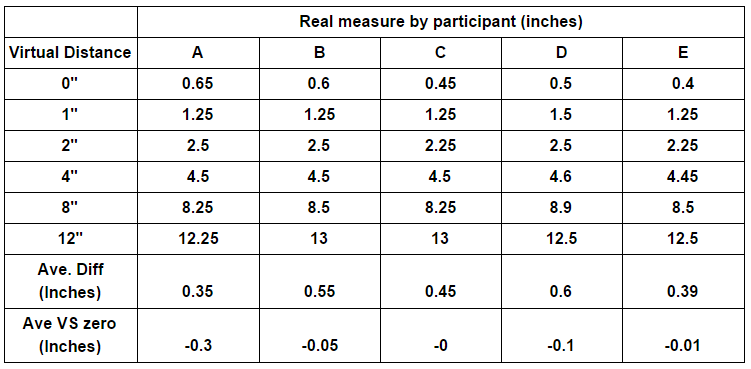

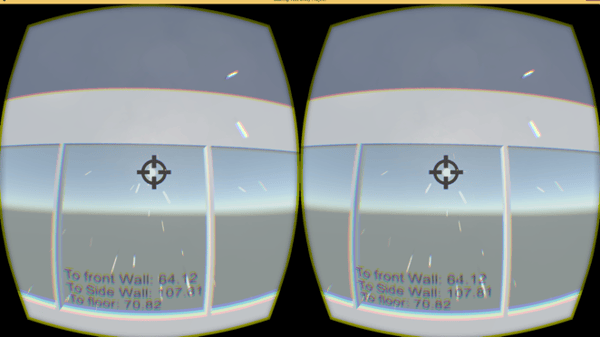

The results of the experiment are listed in the table below:

Table 1. Record of measurement

In the table, “Virtual Distance” is the distance in the virtual world, and “Real Measure” is the measurement in the physical world taken with a tape measure. The real measurement is rounded to the nearest quarter inch. The 0” measurement is taken while the participant holds their index fingers together; it serves as a benchmark for the accuracy of the tracking system. The “Ave.Diff” row gives the average difference between the physical and virtual distances for a participant, excluding the 0” measurement. The “Ave VS zero” row is calculated by subtracting the 0” measurement from Ave.Diff.
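To make the two summary rows concrete, here is a small sketch of how they could be computed for a single participant. The readings are invented placeholders rather than values from Table 1, and the sketch assumes the difference is signed (real measurement minus virtual distance), which the table description does not spell out.

```python
from statistics import mean

# Placeholder readings for ONE hypothetical participant, in inches.
# Keys are virtual distances; values are tape measurements.
readings = {0.0: 0.25, 1.0: 1.25, 2.0: 2.25, 4.0: 4.5, 8.0: 8.25, 12.0: 12.5}


def summarize(readings):
    """Return (Ave.Diff, Ave VS zero) for one participant."""
    # The 0" reading is the tracking offset with the fingertips touching.
    zero_offset = readings[0.0]
    # Assumption: signed difference, real minus virtual, 0" row excluded.
    diffs = [real - virtual for virtual, real in readings.items() if virtual != 0.0]
    ave_diff = mean(diffs)
    ave_vs_zero = ave_diff - zero_offset
    return ave_diff, ave_vs_zero


ave_diff, ave_vs_zero = summarize(readings)
print(f"Ave.Diff = {ave_diff:.2f} in, Ave VS zero = {ave_vs_zero:.2f} in")
```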

The “Ave VS zero” row shows that the physical measurements are very close to the virtual distances: most measurements agree to within 0.1 inch. To obtain an objective assessment, we used a statistical analysis tool to run a two-tailed paired t-test on the 0” measurements and the “Ave VS zero” values. See the table below for details.

Table 2. Two groups for the two-tailed paired t-test

The two-tailed P value equals 0.4794, which means the 0” measurements are not significantly different from the Ave VS zero values. In other words, the statistical analysis supports our hypothesis that distance measured in the physical world matches distance measured in the virtual world.
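For readers who want to see what the paired test computes, the sketch below derives the t statistic and degrees of freedom from two paired samples using only the Python standard library. The sample values are placeholders, not the data in Table 2, and this is not the tool we used. The two-tailed P value then comes from Student's t distribution with those degrees of freedom, for example from a statistics table or a function such as SciPy's `scipy.stats.ttest_rel`.

```python
import math
from statistics import mean, stdev


def paired_t_statistic(xs, ys):
    """Return (t, degrees_of_freedom) for a paired t-test on xs and ys."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two samples of equal length, at least 2 pairs")
    diffs = [x - y for x, y in zip(xs, ys)]
    n = len(diffs)
    sd = stdev(diffs)  # sample standard deviation of the paired differences
    if sd == 0:
        raise ValueError("all paired differences are identical")
    t = mean(diffs) / (sd / math.sqrt(n))
    return t, n - 1


# Placeholder values for five hypothetical participants, in inches.
zero_measure = [0.25, 0.00, 0.25, 0.50, 0.25]
ave_vs_zero_values = [0.10, 0.05, 0.30, 0.35, 0.20]

t_stat, dof = paired_t_statistic(zero_measure, ave_vs_zero_values)
print(f"t = {t_stat:.3f}, degrees of freedom = {dof}")
```

A small absolute t value relative to the critical value for the given degrees of freedom means the test finds no significant difference between the two groups.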

Conclusion for Experiment One

We designed this experiment to project distance and size in the virtual world into the physical world using the Leap Motion. By measuring the physical distance between participants’ fingers, we are able to measure virtual distances, or the sizes of virtual objects, in the physical world. Our experimental data shows that a virtual distance or object size measured with the Leap Motion corresponds to the same distance or size in the physical world. In other words, using an HMD such as the Oculus Rift, one can get an accurate sense of scale that matches perception in the physical world.

Experiment Two: Laser Pointing Rotation Testing

Introduction

In Experiment One, we tested and showed that the sense of scale is one to one between the virtual world and the physical world. The next question our scientifically minded IrisVR engineers asked was: when wearing an HMD, does head rotation in the virtual world match rotation in the physical world? If so, we can conclude that exploring a virtual world while wearing an HMD gives a realistic perception that is spatially similar to the real world.

Hypothesis

When wearing an HMD, head rotation in the physical world matches head rotation in the virtual world.

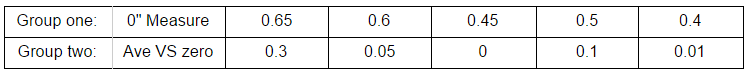

Experiment Setup

We modeled our office space in SketchUp and then ported it into VR. We defined a location in our office space and put the VR camera at the same point in the virtual space. Figure 4 shows how we align the head in the real and virtual worlds. We measured the distance from the head to the left wall and to the front wall, as well as the head height. With those measurements as coordinates, we can position the virtual camera to match the position of the HMD in the physical world.

Figure 4. Experiment Setup
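The alignment step can be thought of as turning three tape measurements into a camera coordinate. The sketch below illustrates this under simplifying assumptions that are ours, not part of the experiment: the room is axis-aligned in the model, x increases to the right, y is up, z increases toward the front wall, and all units are meters. The `Room` type and the numbers are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Room:
    """Axis-aligned room in model coordinates (meters). Hypothetical layout."""
    left_wall_x: float   # x coordinate of the left wall
    front_wall_z: float  # z coordinate of the front wall
    floor_y: float       # y coordinate of the floor


def camera_position(room, dist_to_left, dist_to_front, head_height):
    """Place the virtual camera from three physical measurements."""
    x = room.left_wall_x + dist_to_left
    y = room.floor_y + head_height
    z = room.front_wall_z - dist_to_front
    return (x, y, z)


# Placeholder room and measurements, not values from our office.
office = Room(left_wall_x=0.0, front_wall_z=5.0, floor_y=0.0)
cam = camera_position(office, dist_to_left=1.5, dist_to_front=2.0, head_height=1.2)
print(cam)
```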

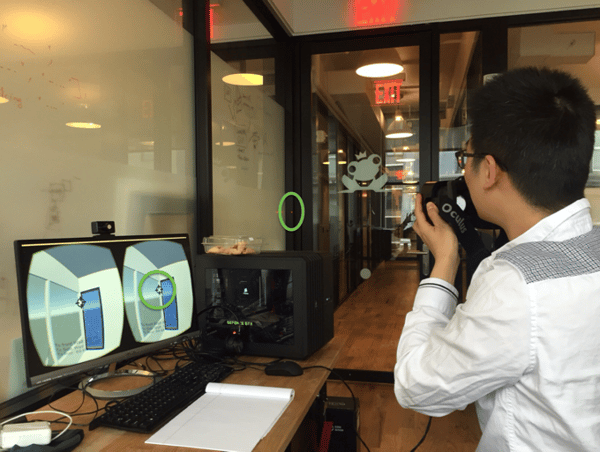

The measurement device is made of an Oculus Rift and a laser distance measurer (see Figure 5). The laser distance measurer is mounted on the center of the Rift, pointing away from it. When turned on, the laser distance measurer shoots a ray that aligns with the user’s sightline. Where the laser hits tells us where the user is looking in the physical world.

Figure 5. Measurement Device

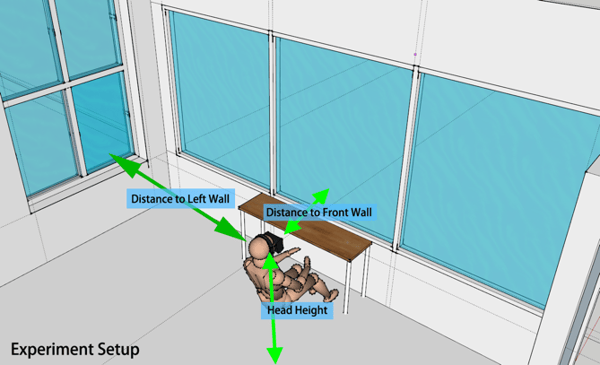

In the virtual world, we put a crosshair at the center of the virtual camera. The virtual camera also shoots a ray outward from its center, which can be thought of as the virtual representation of the laser. Figure 6 shows the virtual world setup. The virtual ray shoots out through the crosshair. When the ray hits an object, it turns into a spark to give the user a clear sense of where the ray hits. The spark can be thought of as the virtual representation of the laser’s red dot. The VR model continuously calculates the distance from the virtual camera to the left wall, front wall, and floor. The measurements are shown at the bottom of the screen so that users can adjust their virtual position to align the virtual head with the physical head position.

Figure 6. Screenshot of VR model
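A game engine normally handles the ray cast, but the underlying geometry for a flat wall is a ray-plane intersection. The sketch below shows that calculation in plain Python as an illustration only; the function name, the wall placement, and the camera position are assumptions, and the actual VR model relies on the engine's own ray casting.

```python
import math


def ray_plane_hit(origin, direction, plane_point, plane_normal):
    """Return (hit_point, distance) where a ray meets a plane, or None."""
    length = math.hypot(*direction)
    if length == 0:
        raise ValueError("direction must be non-zero")
    d = tuple(c / length for c in direction)  # unit direction
    denom = sum(dc * nc for dc, nc in zip(d, plane_normal))
    if abs(denom) < 1e-9:
        return None  # ray is parallel to the wall
    to_plane = tuple(p - o for p, o in zip(plane_point, origin))
    t = sum(tc * nc for tc, nc in zip(to_plane, plane_normal)) / denom
    if t < 0:
        return None  # wall is behind the camera
    hit = tuple(o + t * dc for o, dc in zip(origin, d))
    return hit, t  # t is the distance because d has unit length


# Placeholder scene: camera looking straight at a front wall at z = 5 m.
camera = (1.5, 1.2, 3.0)
result = ray_plane_hit(camera, (0.0, 0.0, 1.0), (0.0, 0.0, 5.0), (0.0, 0.0, -1.0))
print(result)
```

The returned hit point plays the role of the spark, and the returned distance is the kind of value that a laser distance measurer reports in the physical room.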

Experiment Process

We first have the participant sit at a particular location in the room. We then adjust the virtual camera position so that the virtual camera is at the same place as the HMD. We defined two points in the room, one to the right of the participant and the other to the left. We picked points at corners so that it is easier for the participant to aim. We then turn on the laser measurer and have the participant aim at the two points in the virtual world, noting whether the laser hits the same point as the virtual ray.

Results

As shown in Figure 7, the laser hits the same point as the virtual ray in the VR model. The participant then rotates to the right and aims the virtual ray at the second point, which is the corner of the room. As shown in Figure 8, the laser hits the expected position. This result supports our hypothesis that the rotation of the HMD in the physical world matches the rotation of the virtual camera in VR models.

Figure 7. Experiment Result 1

Figure 8. Experiment Result 2
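The comparison in this experiment was visual, but the rotation between the two target points can also be expressed as an angle. The sketch below shows one way to compute the angle swept when turning from one target to the other, given a head position; the coordinates are placeholders and this calculation was not part of the experiment.

```python
import math


def angle_between_deg(head, target_a, target_b):
    """Angle in degrees between the sightlines from head to each target."""
    va = [a - h for a, h in zip(target_a, head)]
    vb = [b - h for b, h in zip(target_b, head)]
    norm = math.hypot(*va) * math.hypot(*vb)
    if norm == 0:
        raise ValueError("targets must differ from the head position")
    cos_angle = sum(x * y for x, y in zip(va, vb)) / norm
    # Clamp to guard against floating-point drift outside [-1, 1].
    cos_angle = max(-1.0, min(1.0, cos_angle))
    return math.degrees(math.acos(cos_angle))


# Placeholder points: one corner to the left, one to the right.
head = (0.0, 0.0, 0.0)
left_corner = (-1.0, 0.0, 1.0)
right_corner = (1.0, 0.0, 1.0)
swept = angle_between_deg(head, left_corner, right_corner)
print(f"Rotation between targets: {swept:.1f} degrees")
```

Computing this angle for both the physical room and the VR model would give a numeric check on whether the two rotations agree.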

Conclusion for Experiment Two

By creating an identical virtual representation of the physical world, we were able to show that when a user wears an HMD in a VR model, their head rotation in the physical world is accurately reflected in the virtual world.

Overall Conclusion

The two experiments verify the feedback we get from many VR users: that they get a true sense of scale and space in virtual reality. With an HMD, a user’s rotation and movement in the physical world are accurately reflected in the virtual world. For AEC professionals, especially architects, this means that when they use VR to visualize a design, they can truly inhabit it long before a single brick has been laid.

Remaining Questions

While we verified that two of the most important factors in VR, rotation and scale, map to the physical world at a 1-to-1 ratio, there are trickier parameters that affect the user's perception. Inter-pupillary distance (IPD), the distance from the eye to the VR display, the presence of eye tracking, and even whether the user is standing or sitting all play a role in shaping the user's perception of the VR world. The next question we need to answer is therefore how to measure the interaction between those parameters and the user's subjective experience. We leave this question open for the future.
